May 05, 2026 - Today at the Sustainability Conference for Responsible Research Computing (SC4RC), hosted at CERN in Geneva, I had the pleasure of delivering the opening keynote. In the talk, titled “Faster, Greener, Precise Enough: Challenges and Directions in GPU Auto-Tuning”, I gave an overview of the research that the Accelerated Computing research group has done in recent years to develop technologies that make GPU computing more sustainable. Below is a brief overview of the talk.

Graphics Processing Units (GPUs) are the cornerstone of modern computing platforms: in today's supercomputers, they provide nearly all of the compute power and consume the majority of the electricity. Software for GPUs is written in specialized programming languages, such as CUDA for Nvidia GPUs or HIP for AMD GPUs, which offer a relatively low level of abstraction and give the programmer a great deal of control to optimize for performance.

Modern GPUs are increasingly designed with AI as the primary application, with growing support for low-precision arithmetic and little improvement in the high-precision arithmetic that HPC applications often need. This creates new opportunities for mixed-precision applications, but also adds another major programmability challenge to using modern GPUs efficiently.

The energy consumption of GPU applications greatly depends on how well the software is optimized to utilize the underlying hardware. In fact, the fastest implementation is sometimes not the most energy-efficient one, because energy consumption depends not only on execution time but also on power draw, which is affected by factors such as clock frequency and memory traffic. However, both built-in power sensors and state-of-the-art power meters often lack the accuracy and temporal granularity needed for large-scale exploration of GPU optimization strategies.

In general, optimizing the performance of GPU applications requires exploring vast and discontinuous program design spaces, a task that is infeasible for developers to perform manually. Automatic performance tuning (auto-tuning) is an effective and commonly applied technique for finding the combination of algorithm, application, and hardware parameters that maximizes the performance or energy efficiency of GPU applications.
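As a rough illustration of what an auto-tuner does at its core, the Python sketch below enumerates every combination of a few tunable parameters, skips combinations that violate a restriction, "benchmarks" the rest, and keeps the fastest. Everything here is hypothetical: the parameter names `block_size_x` and `tile_size`, the restriction, and especially `measure_runtime_ms`, which is a made-up synthetic model standing in for compiling and timing a real GPU kernel. Real search spaces are usually far too large for this kind of brute-force search, which is why auto-tuners rely on smarter optimization strategies.

```python
import itertools

# Hypothetical tunable parameters of a GPU kernel (illustrative only).
tune_params = {
    "block_size_x": [32, 64, 128, 256, 512, 1024],
    "tile_size": [1, 2, 4, 8],
}

def is_valid(config):
    # Hypothetical restriction: a thread block may process at most 4096 elements.
    return config["block_size_x"] * config["tile_size"] <= 4096

def measure_runtime_ms(config):
    # Stand-in for compiling and benchmarking the kernel on a real GPU.
    # This synthetic cost model is made up so that the sketch runs anywhere.
    bs, ts = config["block_size_x"], config["tile_size"]
    occupancy_penalty = abs(bs - 256) / 256
    tiling_cost = 1.0 / ts + 0.05 * ts
    return 1.0 + occupancy_penalty + tiling_cost

def brute_force_tune(tune_params, is_valid, objective):
    """Evaluate every valid configuration and return the one with the lowest objective."""
    names = list(tune_params)
    best_config, best_value = None, float("inf")
    for values in itertools.product(*(tune_params[n] for n in names)):
        config = dict(zip(names, values))
        if not is_valid(config):
            continue  # skip configurations that violate the restriction
        value = objective(config)
        if value < best_value:
            best_config, best_value = config, value
    return best_config, best_value

best, runtime = brute_force_tune(tune_params, is_valid, measure_runtime_ms)
print(best, round(runtime, 3))
```

Passing the objective in as a function means the same search loop could minimize energy instead of runtime simply by swapping in a different measurement function.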

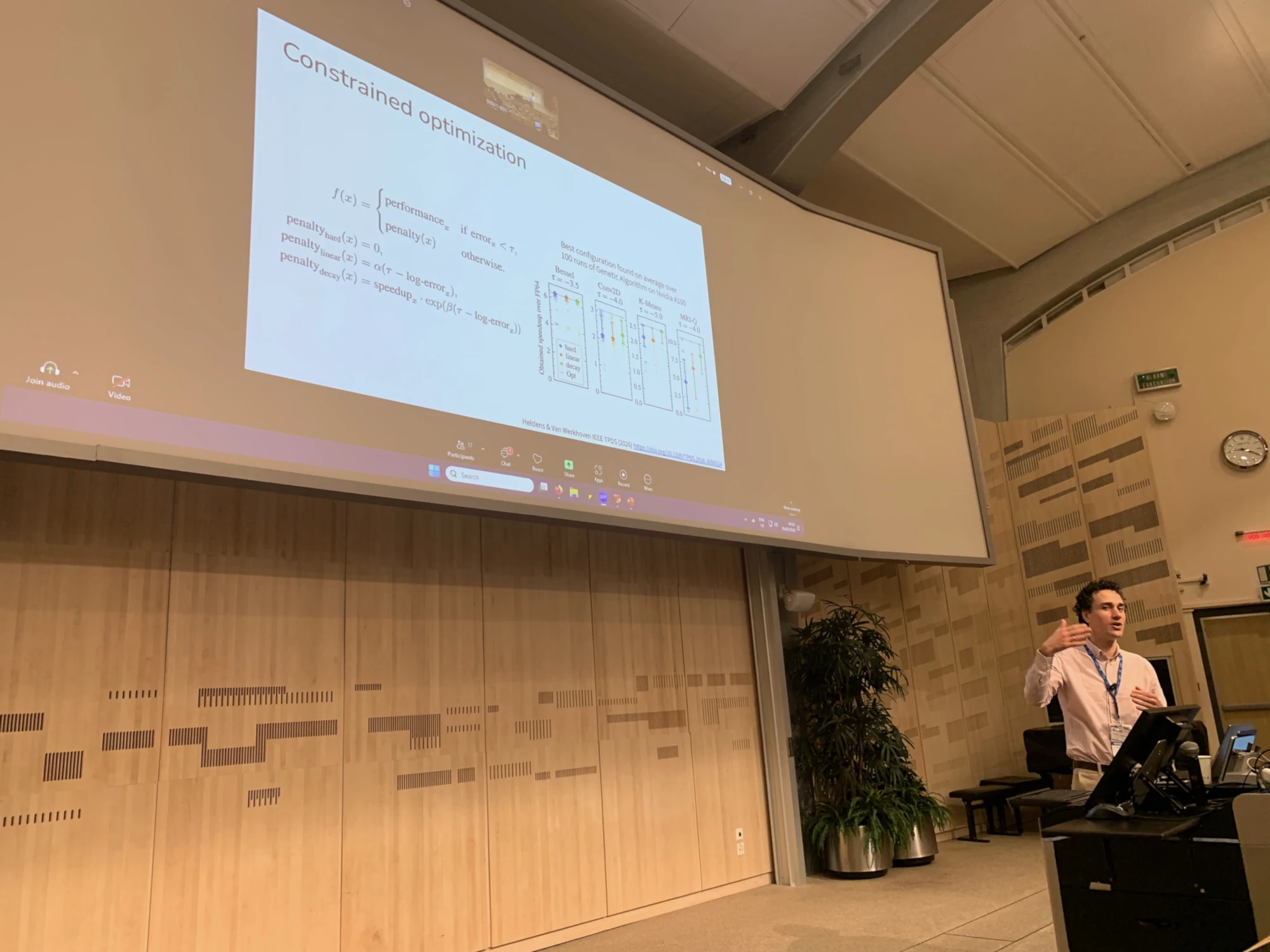

To respond to the emerging challenges of mixed-precision computing and energy efficiency, the field of auto-tuning is now turning towards constrained and multi-objective optimization algorithms, which allow automatic exploration of the trade-offs between performance, energy consumption, and numerical accuracy. In the talk, I highlighted some of the key challenges, latest developments, and future plans in GPU auto-tuning.
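To make the idea of constrained multi-objective tuning a bit more concrete, the Python sketch below takes a set of measured kernel variants, discards those that violate an accuracy constraint, and keeps only the Pareto-optimal ones: variants for which no other variant is at least as good in runtime, energy, and error while being strictly better in at least one. This is a generic textbook Pareto filter, not necessarily the method used in our tools, and the variant names and numbers are invented purely for illustration, not measurements.

```python
from typing import NamedTuple

class Result(NamedTuple):
    name: str
    time_ms: float
    energy_j: float
    rel_error: float

def objectives(r):
    # All three objectives are to be minimized.
    return (r.time_ms, r.energy_j, r.rel_error)

def dominates(a, b):
    """True if a is no worse than b in every objective and strictly better in at least one."""
    oa, ob = objectives(a), objectives(b)
    return all(x <= y for x, y in zip(oa, ob)) and any(x < y for x, y in zip(oa, ob))

def pareto_front(results, max_rel_error):
    """Apply the accuracy constraint, then keep only the non-dominated results."""
    feasible = [r for r in results if r.rel_error <= max_rel_error]
    return [r for r in feasible if not any(dominates(o, r) for o in feasible)]

# Hypothetical measurements of kernel variants (invented numbers).
results = [
    Result("fp64_fast", 10.0, 3.0, 1e-15),
    Result("fp64_lowclock", 12.0, 2.4, 1e-15),  # slower, but uses less energy
    Result("fp32", 6.0, 1.8, 1e-7),
    Result("fp16", 3.0, 0.9, 1e-3),             # violates the accuracy constraint below
    Result("fp64_slow", 11.0, 3.2, 1e-15),      # dominated by fp64_fast
]

for r in pareto_front(results, max_rel_error=1e-6):
    print(r.name, r.time_ms, r.energy_j, r.rel_error)
```

The hypothetical `fp64_lowclock` variant also illustrates the earlier point that the fastest implementation is not always the most energy-efficient one: it survives on the front because it trades runtime for lower energy.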

The slides used in the presentation can be found here.